Despite constant progress, there hasn’t been much to show visually from my latest developments.

I showed my game on the TIGSource forums, where I accidentally posted a scaled image of Dungeon Trap. Many people seemed to like the “Ghetto Blur”, as it was called, that came from the scaling. I began playing around with some methods to achieve something more intentional, reminiscent of a really misaligned old monitor.

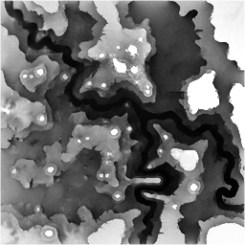

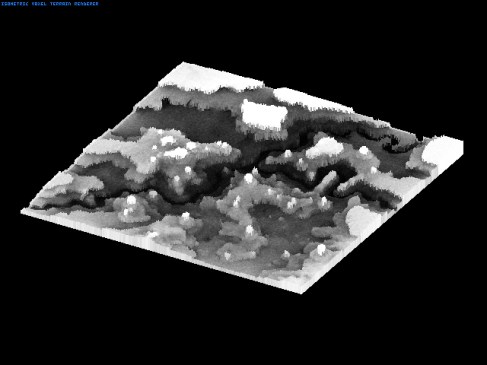

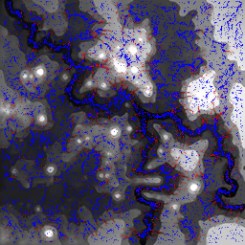

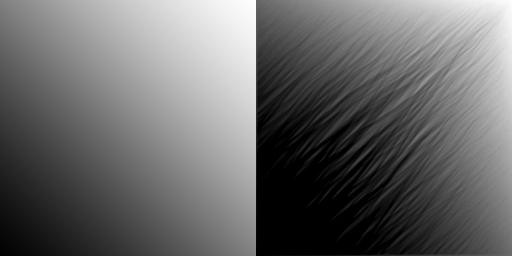

My preferred method of rendering in the Tengu engine is to draw the game to an off-screen buffer at 1:1 size, and then perform a very fast scaled blit to the screen to blow this up to between 2x and 4x the size. At this stage I could also apply a dirty filter to mess with the colours, effectively offsetting each of the colour channels a little. The mockups posted with this entry show the effect.
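To make the two steps concrete, here is a minimal sketch in plain Python. It is not Tengu's actual code: the function names, the list-of-rows pixel format, and the one-pixel shift amounts are all assumptions chosen for illustration, and a real engine would do this on a proper surface rather than nested lists.

```python
def scale_nearest(pixels, factor):
    """Blow up a 2D grid of (r, g, b) tuples by an integer factor.

    Each source pixel becomes a factor x factor block (nearest neighbour).
    """
    out = []
    for row in pixels:
        wide = [px for px in row for _ in range(factor)]
        out.extend(list(wide) for _ in range(factor))
    return out


def offset_channels(pixels, red_shift=1, blue_shift=-1):
    """Shift the red and blue channels sideways, leaving green in place.

    The shift amounts are hypothetical defaults. Reads that fall outside
    the image are clamped to the nearest edge pixel.
    """
    height = len(pixels)
    width = len(pixels[0]) if height else 0

    def clamp(x):
        return max(0, min(width - 1, x))

    out = []
    for y in range(height):
        row = []
        for x in range(width):
            r = pixels[y][clamp(x - red_shift)][0]
            g = pixels[y][x][1]
            b = pixels[y][clamp(x - blue_shift)][2]
            row.append((r, g, b))
        out.append(row)
    return out


# A tiny 2x1 "game buffer": one white pixel next to one black pixel.
buffer = [[(255, 255, 255), (0, 0, 0)]]
screen = offset_channels(scale_nearest(buffer, 2))
```

Doing the offset after the upscale means the colour fringes are a single screen pixel wide rather than a whole scaled-up game pixel, which is closer to the look of a slightly misaligned monitor.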

I also realized that I really want to commit to using procedural art generation in the level creation process. The basis of each level is really just the position of the ladders, floor tiles and dynamic entities. If you look at the mockup from the previous entry you can see that most of the fluff in the background is a regular pattern, distributed semi-randomly, tending to hug the ladders and floor tiles. This could be generated procedurally instead of the usual method of creating a limited set of pre-made tiles to be placed by hand during the level's creation. The same applies to the pattern inside the floor tiles, which is also a good candidate for procedural generation. Eventually I want to reach a stage where I can just place two kinds of tile, one to signify floor position and one to signify a ladder, and then at the press of a button have the procedural algorithms kick in to generate all the tiles required to form a unique and complete map. All that would be left for the designer is to place the entities and finally script the level.
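As a rough sketch of the "fluff hugs the ladders and floor" idea, the snippet below scatters decoration tiles into empty cells, with a chance that falls off with distance from the nearest structural tile. The tile characters, the `decorate` function, and the probability values are all made up for illustration; the real generator could use any rule it likes.

```python
import random

# Hypothetical tile codes for this sketch only.
FLOOR, LADDER, EMPTY, FLUFF = "#", "H", ".", "*"


def decorate(level, seed=0, max_dist=2, base_chance=0.8):
    """Return a copy of `level` with fluff placed near floors and ladders.

    `level` is a list of equal-length strings. An empty cell at distance d
    (in tiles, diagonals counted as 1) from the nearest floor or ladder
    becomes fluff with probability base_chance / d, if d <= max_dist.
    """
    rng = random.Random(seed)  # seeded so a level always decorates the same way
    height, width = len(level), len(level[0])
    anchors = [
        (x, y)
        for y in range(height)
        for x in range(width)
        if level[y][x] in (FLOOR, LADDER)
    ]
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            tile = level[y][x]
            if tile == EMPTY and anchors:
                dist = min(max(abs(x - ax), abs(y - ay)) for ax, ay in anchors)
                if dist <= max_dist and rng.random() < base_chance / dist:
                    tile = FLUFF
            row.append(tile)
        out.append("".join(row))
    return out


level = [
    "........",
    "...H....",
    "...H....",
    "########",
]
for line in decorate(level, seed=1):
    print(line)
```

Seeding the generator per level keeps the result repeatable, so only the floor and ladder layout (plus the seed) would need to be stored.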

On the subject of scripting, I have spent one hell of a lot of time designing a really unique and, I hope, fun scripting system that was born from my studies of digital logic design. My plan is something like this: every entity in the world can have a variable number of virtual inputs and outputs, inputs being things that will affect the entity, and outputs being products of the entity's internal state. The signals that these inputs and outputs carry will be purely binary, however, just high and low states. For instance, a lever in the game will output HIGH when it is pulled to the right, and LOW when it is pulled to the left. It could be linked to the input of a door, which will open when that input is HIGH and close when that input is LOW. With one link, the lever now controls the door in a natural way. With the addition of standard logic gates, some very complex interactions can be scripted between entities. The beauty is that digital logic at a basic level can be extremely intuitive, and a bonus is that no script programming would be required. The complexity of the scripted events could still be pushed as far as you want; theoretically, an entire computer could be simulated from a digital logic design in the level.
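The lever and door example can be sketched in a few lines of Python. This is only an illustration of the idea, not the actual scripting system: the class names, the single-output simplification, and the pull-based evaluation (a door asks its source for the current signal) are assumptions, and feedback loops are not handled here.

```python
class Lever:
    """Outputs HIGH (True) when pulled right, LOW (False) when pulled left."""

    def __init__(self):
        self.pulled_right = False

    def output(self):
        return self.pulled_right


class Gate:
    """A logic gate: combines the outputs of its input sources with `fn`."""

    def __init__(self, fn, *inputs):
        self.fn = fn
        self.inputs = inputs

    def output(self):
        return self.fn(*(src.output() for src in self.inputs))


class Door:
    """Open while its single input is HIGH, closed while it is LOW."""

    def __init__(self, source):
        self.source = source

    @property
    def is_open(self):
        return self.source.output()


def AND(a, b):
    return a and b


def NOT(a):
    return not a


# One link: the lever drives the door directly.
lever = Lever()
door = Door(lever)
lever.pulled_right = True   # door.is_open is now True

# With gates: the vault opens only if lever A is right AND lever B is left.
lever_a, lever_b = Lever(), Lever()
vault = Door(Gate(AND, lever_a, Gate(NOT, lever_b)))
```

Because gates expose the same `output()` method as entities, they can be chained freely, which is what lets simple links grow into more complex circuits.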

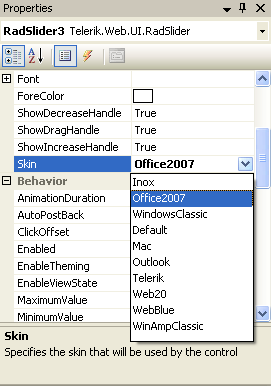

For this to work, however, the scripts must be easy to make and edit, so I have been working on a visual editor as an extension to the level editor. The accompanying image shows the visual design for each of the built-in logic gates in the scripting system; the left side of each gate shows the inputs and the right side the outputs.

These two areas, the level editor and the scripting system, will be my main focus for the foreseeable future. Other minor plans are to integrate the level editor into the game itself, allowing a fast design and playtest turnaround and encouraging user level creation. One of my goals is to allow entire levels to be output as small encoded text strings that could be shared on any website or by any standard means. The only other game I know of that had this feature was N, by Metanet Software.
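One possible way to get a level into a short, pasteable string is to compress the tile data and then encode it with a URL-safe alphabet. The sketch below uses Python's standard `zlib` and `base64` modules; the `encode_level` and `decode_level` names and the list-of-strings level format are assumptions for illustration, and this is not a description of how N stores its levels.

```python
import base64
import zlib


def encode_level(rows):
    """Pack a list of tile-row strings into one URL-safe ASCII string."""
    raw = "\n".join(rows).encode("utf-8")
    packed = zlib.compress(raw, 9)  # repetitive tile maps compress well
    return base64.urlsafe_b64encode(packed).decode("ascii")


def decode_level(text):
    """Reverse encode_level: recover the original list of row strings."""
    packed = base64.urlsafe_b64decode(text.encode("ascii"))
    return zlib.decompress(packed).decode("utf-8").split("\n")


level = [
    "................",
    "....H...........",
    "....H...........",
    "################",
]
code = encode_level(level)
print(code)                      # a single line of letters, digits, '-', '_', '='
assert decode_level(code) == level
```

The URL-safe alphabet avoids characters such as `+` and `/`, so the string survives being pasted into forum posts or links.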